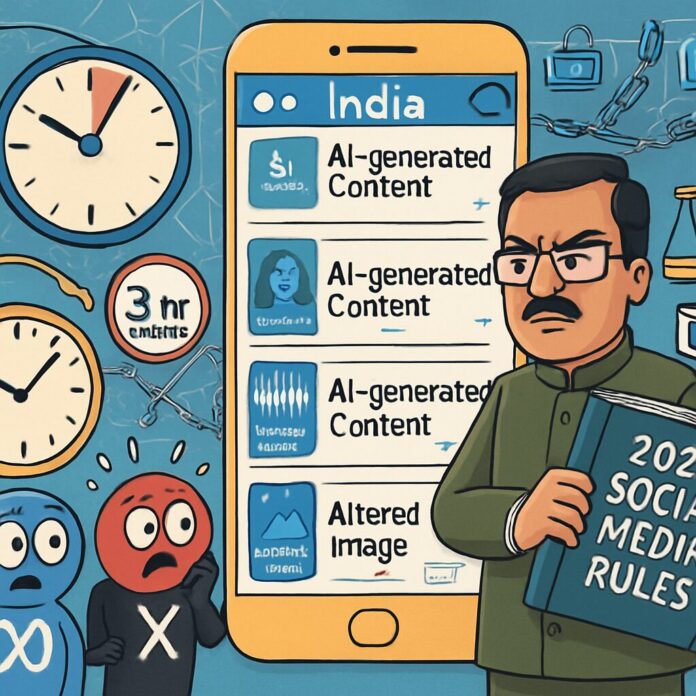

India has introduced stringent new social media regulations requiring platforms to label AI-generated content and comply with takedown requests within three hours, sparking widespread debate over digital governance and free speech.

On February 10, 2026, the Indian government notified amendments to the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021, through gazette notification G.S.R. 120(E). These changes formally bring synthetically generated information (SGI), including deepfake videos, synthetic audio, and altered visuals, under a regulatory framework for the first time. The rules require social media intermediaries such as Meta, Google’s YouTube, and X to ensure that all AI-generated content is prominently labelled so users can immediately identify it.

A critical aspect of the amendments is the requirement for platforms to embed persistent metadata and unique identifiers in AI-generated content, making it possible to trace that content back to its origin. However, the government has exempted routine editing, such as colour correction or noise reduction, as well as research materials, from labelling obligations. This nuanced approach aims to target deceptive content without stifling legitimate uses of AI tools.

Perhaps the most contentious change is the drastic reduction in compliance timelines. Platforms now have just three hours to act on certain lawful orders from authorities, a sharp drop from the previous 36-hour window. Other deadlines have also been tightened: the 15-day period for some actions is now seven days, and the 24-hour timeline has been halved to 12 hours. These accelerated takedown requirements are designed to curb the rapid spread of harmful content, particularly in emergencies or during elections.

The move is part of India’s broader crackdown on online misinformation and deepfakes, which have raised alarms about national security and individual privacy. By linking synthetic content to criminal laws like the Bharatiya Nyaya Sanhita and the POCSO Act, the government underscores the seriousness of violations involving child abuse material or identity theft. Platforms must also warn users every three months about penalties for misusing AI-generated content.

Reactions to the new rules have been mixed. Digital rights groups have criticized the tight deadlines, warning that they could lead to arbitrary censorship and undermine free expression. Tech companies, represented by bodies such as the Internet and Mobile Association of India, successfully lobbied against an earlier draft proposal that would have required watermarks covering 10% of the screen area, arguing that it was impractical. The final rules mandate “prominent labelling” instead, offering more flexibility but still posing implementation challenges.

The regulations will take effect on February 20, 2026, giving social media platforms just ten days after notification to adjust their systems. While the government has assured intermediaries that compliance will not affect their safe harbour protections under Section 79 of the IT Act, the changes are likely to strain resources and test the limits of automated moderation technologies.

This development positions India at the forefront of global efforts to regulate AI on social media, setting a precedent that other nations may follow. As platforms grapple with these new obligations, the balance between combating misinformation and preserving open discourse will remain a key point of contention in the world’s largest democracy.